SQL Server is a big product, with lots of features. Many of the features—hopefully the vast majority of them—are solid and allow us to build trustworthy, secure, and reasonably usable database applications. But nearly everyone has stumbled into a few, shall we say, “darker areas” of the product now and again. Strange and seemingly nonsensical enhancements have come and gone over the years, and we’re all certainly aware of the Microsoft tendency to occasionally introduce amazing-looking but almost unworkable v1 features that never get another glance from the product team prior to their deprecation years later.

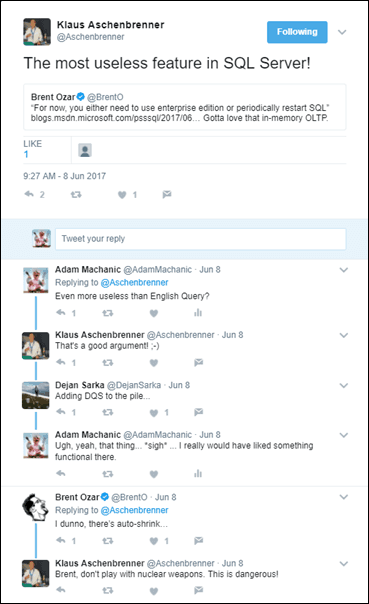

The other day Klaus Aschenbrenner brought this topic to the forefront of my mind (or at least my Twitter stream) with his bold proclamation that the generally lauded “in-memory OLTP” is the “most useless feature in SQL Server.”

The other day Klaus Aschenbrenner brought this topic to the forefront of my mind (or at least my Twitter stream) with his bold proclamation that the generally lauded “in-memory OLTP” is the “most useless feature in SQL Server.”

Personally I have yet to see an especially interesting and non-trivial use case for the feature (and no, user session state management is certainly not it), but most useless? Surely, I thought, that prize has to go instead to English Query? Dejan Sarka quickly chimed in and recommended Data Quality Services. It’s tough to argue with that one, but then Brent Ozar mentioned auto-shrink…

It was at this point that I realized just how many candidates there are for “most useless” things lying around the product. So I decided to create my own tweet. I asked for the most useless feature, anytime between version 7.0 (which I would call the beginning of SQL Server’s “modern era”) and now. I received quite a few suggestions, and so I have decided to catalog them here—along with a bit of personal commentary on each one.

The list that follows is mostly unordered and culled straight from what I received on Twitter. Hopefully I haven’t missed anything due to Twitter’s weird threading and “priority” mechanisms. And please let me know in the comments if your favorite useless feature is missing, or you’d like to add a comment and/or argument about one of these. Perhaps we can find some way to turn these dark and ugly corners into things of beauty? Well, we shall see…

So without further ado, the Most Useless SQL Server Features, per my Twitter followers, as of June 2017!

In-Memory OLTP. The one that started it all. Useless? I guess it depends on where you draw the line between “OLTP” and “memory optimized.” The latter is, I think, demonstrably useful in certain cases. For example, improving the performance of temporary tables. But as for the full-blown OLTP use case, as well as the various native optimized options? Very few databases suffer from the exact types of latch contention for which this feature was created, and there are much better and easier ways to get even better performance gains than are offered by native optimization. This feature seems to be getting continued attention from Microsoft, so it may well prove to be the foundation for some very useful things in coming releases. I am going to reserve judgement for now, whether or not Mr. Aschenbrenner agrees.

English Query. My number one vote. This was an attempt at bringing “natural language” capabilities into the product. I believe it was introduced in SQL Server 7.0. I’ve never talked to anyone who used it in a production environment. Personally I never even installed it. I don’t recall hearing that it was deprecated, but it disappeared at some point, maybe in SQL Server 2005? No one cried. I guarantee it.

Data Quality Services (DQS). This one was so promising on the surface, and something that is absolutely needed in many data warehouse projects. A centralized business rules and data verification platform can really help reduce complexity. But DQS never made it past the initial stage. It was (is?) effectively a collection of valid text strings—and that’s it. No real “rules,” no real workflow. And abysmal performance. Abysmal. An excellent suggestion for this list to be sure.

Master Data Services (MDS). I remember when Microsoft acquired this tool. I was working on a large data warehouse project. We watched an online demo and we’re blown away. We decided right away to use it the moment Microsoft released its version. But then that didn’t happen. For a long, long time. Two years later, as I recall, it finally hit the street. And while I never personally tried it, from what I heard it was a complete disaster of bugs and faulty workflow. I’ve heard that it has gotten marginally better since then, but I think the ship has sailed. Microsoft lost the trust of its end users and this product is forever doomed for inclusion in lists like this one.

Auto-Shrink. “Stop my file from growing and taking over the disk!” Sounds great, but is it? If you understand even a little bit about SQL Server architecture you understand why that file is growing. And sometimes you really do need to shrink a file, but if you look at the internals of shrink you know that it can cause fragmentation. So shrink is something you want to avoid, not automate. Auto-shrink is a feature put into the product for users who don’t want to deal with the product. It can only cause you more problems. Useless? Sure, I’ll go there.

Auto-Close. This option is one that few people know what to do with. It’s there for a reason—it helps large instances, with a tremendous number of databases, make better use of system resources when not all of those databases need to be used at the same time. Most shops shouldn’t turn it on. But useless? I’d say no.

Auto-Grow. When creating a file in SQL Server you have various options. You can pre-allocate as much space as you think you’ll need and hope to fill it up later. Or you can allocate the minimum amount of space and let the file grow itself, or not over time. If you only pre-allocate, you risk not having enough space there when a spike occurs and you need it. If you let your files grow too often, you’ll introduce fragmentation. If you grow your files by large percentages, you can wind up consuming way too much disk space. If you combine the best of all of these—pre-allocating using metrics and allowing for incremental growth as a safety net—you’ll be in pretty good shape. All of which is a long way to say that I have no idea why this feature was suggested; it is, in my book, anything but useless. I think about it and consider its configuration every single time I create a database file. You should, too.

Notification Services (SSNS). This feature was a framework designed to help generate emails, reports, and other forms of “notifications” when data changed certain ways. (Example: “Let me know when the stock price hits $40/share.”) Added sometime in the early 00s, it was more or less doomed to failure from the get go: It was driven by a very confusing XML-based interface, the documentation was beyond horrible, and the book authoring community largely ignored it (with the exception of a few pages of high-level coverage here and there, and a book written by the guy who created the feature for Microsoft). If you had the patience to muddle through the mess (as I did!) it was possible to get the thing running and doing some fairly decent things. But the learning curve was so steep, and the general situation so poor, that Microsoft didn’t even bother keeping v1 around for more than a single release. The plug was pulled and Notification Services was deprecated prior to SQL Server 2008. Probably for the best.

Query Notifications. Entirely unrelated to, but often confused with Notification Services. This is a SQL Server and ADO.NET feature that allows applications to receive events when the results of a SQL Server query might change due to data modifications. This feature was released as part of SQL Server 2005 and would be hugely useful in so many cases except that it has proven itself time and again to be entirely undependable and nearly impossible to debug. And Microsoft has, as far as I can tell, put exactly $0.00 of investment into improving the situation. I am actually getting angry as I type, because there are so very many excellent use cases for this thing that has been left out there in its piece of junk state—but I can’t use it in any but the simplest of scenarios due to the risk factor. Thanks, Microsoft.

Buffer Pool Extension (BPE). A feature that received a lot of attention in SQL Server 2014. Well there wasn’t much else in SQL Server 2014 to give attention to. What exactly is the use case for this thing? It’s not entirely clear, but the combination of a fast local SSD with really slow primary storage isn’t especially common. On Twitter Joey D’Antoni suggested some Azure use case, but I felt like he was pushing for it. Useless? Probably for most of us.

Management Data Warehouse (MDW) / Data Collector. Everyone who does anything serious with SQL Server buys or builds a monitoring tool. That’s not cheap. Microsoft could provide huge value to its users by shipping a bunch of monitoring and collection capabilities with the product! At least, that was the idea back in 2008. MDW is a classic example of a great idea that got rushed into the product as version 1.0 and then never touched again. It is famously unable to be uninstalled once you turn it on. The data collector is famously limited in what it can do unless you exercise various arcane hacks that are only documented on long-dead blogs written by people who no longer work for Microsoft. And the reporting end is almost nonexistent. Why the ball was dropped, I’ll never know. But in the meantime, I don’t plan to bother turning this thing on.

Lightweight Pooling / Fiber Mode. This rather arcane feature was added to help very marginally improve performance of very specific workloads. From Day One it shipped with a warning: Don’t turn this on. It breaks various SQL Server features which, apparently, aren’t needed for that very specific workload. I don’t recall the exact specifics around when or why you’d use this, but I never have. No one I’ve talked to ever has. I’m not even sure it still works, but I won’t be trying it out. I wonder if the handful of customers for whom this was created actually benefitted? If that’s you, let us know!

JSON. The long-awaited follow-up to XML support has finally arrived in SQL Server 2016, and right away someone votes it as most useless feature ever. Tough crowd. Personally I’m on the fence here. I don’t see much reason to store data in a “relational” database in JSON format (or XML for that matter). I do see some very limited use case around rendering data to JSON, but I would question why the app can’t do that? Same on the shredding side. Looking back at the XML features, I have mainly used them to query Dynamic Management Views; and in that case I only did that because I had to, due to the fact that those views expose so much data in XML format! I also heavily use XML for concatenating strings. Not so much actual XML work, though. I assume the same story will hold true for JSON. A few people here and there will use it. Most of us won’t, until Microsoft starts converting all of the system objects over. (Please, no!)

SQL Server Management Studio (SSMS). I live in SSMS all day long. Aside from a few crashes and the occasional performance issue I’ve had no problem with it, ever, and I felt that it was a huge upgrade from the Query Analyzer and Enterprise Manager days. Sorry, whomever suggested SSMS for this list, but I am absolutely a fan. I’ve tried a few third-party management tools and they don’t even begin to come close. SSMS, useless? Not at all.

Connect to SSIS from SQL Server Management Studio. I don’t even know what to say here. I saw this option once or twice, tried it, didn’t see anything that pertained to me, and never really looked into it. Maybe Andy Leonard or some other SSIS specialist can fill us in on the point of this thing. I can’t vote one way or another because I completely lack the context.

DROP DATABASE IF EXISTS. This one was suggested by Greg Low, who explained that it fails if anyone is connected to the database. And disconnecting them first requires checking if the database exists. Well that makes way too much sense. Useless.

Columnsets (and Sparse Columns in general). I remember being really excited when these features shipped, and I couldn’t wait for them to get some enhancements. (I asked for columnset data type information; my request was denied.) For those who don’t recall, Sparse Columns is supposed to be a way to model really wide tables in which most of the attributes are NULL for any given row, and columnsets is a way to bring back some of the sparse data in an XML format. And these would have been interesting features except Sparse Columns requires making a DDL change on the target table, and it’s really confusing as to when you should or should not use it and how much space you’ll save and so most people just stick with the tried-and-true Entity-Attribute-Value approach. And then there is the manageability problem of maintaining a table with 20,000 columns. Yeah, no thank you. Columnsets were sort of just tacked on to the whole thing and maybe if you could create more than one set and name them or something like that it would be cool, but no, you could only have one, and it has some sort of weird limitations that I don’t remember right now because I’ve never looked at it again and don’t plan on it. I’ll mark this entire feature set, sadly, as useless. But it could have been so great. Version 1.0 For Life strikes again.

SQLCLR. If you’ve been following me for any amount of time you know I’m a fan. It has its quirks, it’s still pretty much a v1 thing after 12 years, but I’ve gotten plenty of great use out of it. So has Microsoft. Various SQL Server features leverage it internally. Needs love, but hardly useless from where I sit.

Utility Control Point (UCP). I remember hearing about this and reading up on it when it was released. (2008 R2?) But I can’t say much beyond that. I just looked at the documentation and I’m not entirely sure what this thing is supposed to be. I’m not going to spend any more time on it. Does anyone have a counter argument for why we shouldn’t call this useless?

Raw Partitions. I am not a storage guy, but I guess this can help improve disk I/O if you’re willing to take on some risk. That seems like a bad tradeoff for most databases. ‘nuff said.

Uninstall. I’ve lost count of the number of times I’ve installed SQL Server over the years, but I’ve probably only uninstalled a few. And I can’t say it was ever a simple experience. SQL Server tends to leave fragments all over the place, like many Microsoft products, and uninstall is anything but clean. The best way to do it is to simply pave away. So I have to agree with whomever posted this suggestion on Twitter. Uninstall, you’re pretty useless.

Service Broker (SSB). Ah, Service Broker. I have a real love-hate relationship with this feature. I’ve used it on lots of projects. It works well, when it works. It’s a pain to debug when it doesn’t work. Its architecture is ridiculous and beyond overbaked. (Conversations and dialogs, really? All we want is a simple queue! And the fact that you can create fake conversations to make it go faster? I can’t even…) But when it comes down to it, having some kind of queue in the database is better than not having any kind of queue in the database. It’s solid, transactional, and after a bit of configuration it usually gets the job done.

Not Freeing Allocated Memory Except Under Pressure. SQL Server allocates as much memory as it needs, up to however much you tell it to use. (The memory configuration amount is even referred to as a “target.”) It uses all of that memory to store your data and query plans so that it doesn’t have to re-retrieve it off of slow disks or re-compute them, respectively. And that is a good thing. Why should it prematurely free that memory? If you don’t want it using as much memory, tell it to use less memory. Useless? No. This is absolutely a feature and a correctly implemented one at that. Sorry, Twitter person.

Database Engine Tuning Advisor (née Index Tuning Wizard). Back when I was a SQL Server newbie I needed to make things fast. The only problem is that I had no idea how to make things fast. So I clicked around and there was this wizard that said it would analyze my workload and tell me what to do. How cool is that? I tried it and it suggested all sorts of interesting things, which I did. And then a year later I knew a bit more and was kind of embarrassed that I’d created some of these ridiculous artifacts. And then a few years later Microsoft gave the thing its kind of downgraded new name—it’s no longer a full-on Wizard, now it’s merely an Advisor. But here’s the thing: I want my advisors to give me good advice, not wreck my database. So I’ll go elsewhere for advice, like for example my brain. Thanks.

DBCC PINTABLE. The guy who doesn’t like SQL Server not freeing allocated memory would lose his mind over this one. A long-gone feature at this point, it specifically marked tables such that their data would be infinitely held in memory. (I can’t recall what was supposed to happen under pressure.) As it turns out this is pretty useless given the way SQL Server’s least-recently used cache aging algorithm works. So yeah, useless.

Virtual Interface Adaptor (VIA) Network Protocols. I don’t even know what this is, I just wrote commentary on over 20 other things, and I’m too tired to read up. So sorry, Gianluca Sartori. Tell us all about it in the comments, if you would.

Mirrored Backups. Another suggestion by signore Sartori. I have no feeling on this one way or another, but it never seemed like taking a backup and then copying it was all that much of an issue so I don’t really see the point.

SQL_VARIANT. Long ago I did some work in Visual Basic and quickly became annoyed with the fact that I never knew what type my variables were, or even whether they had been properly initialized. Welcome to the joys of variable data type systems. I was able to work around this somewhat by using Option Explicit, but now let’s think about the difference between your application—which needs to deliver data to your users—and your database—which is expected to store, master, and protect that data. Using a variable data type in the application is annoying. Using it in the database? That’s downright scary. That means you don’t know your data. That said, this data type has been useful over the years in very special cases, such as functions that need to be able to seamlessly return various different types of data. As a matter of fact, Microsoft used SQL_VARIANT in SQL Server 2016 as part of the context data enhancement. So useless? No. But use with extreme prejudice.

So that’s that. The complete list from Twitter, annotated. Got more? Want to argue, agree, or otherwise? Comment away.

HOW DID NO ONE MENTION THE CLOCKHANDS DMV?

great post. When I saw Klaus was involved with this "competition" I immediately wondered where SSB would land on your list…consider he wrote a/the book on it. Seriously though, wouldn’t it be nice to have asynch/await (node calls it callbacks) for long running stored procs without using sqlagent or something out-of-band? Instead we get SSB which is some kind of goofy RabbitMQ implementation in the data tier that doesn’t support the most common use case…fire-and-forget (apologies to Remus Rusanu). total abomination

–dave

Just to clarify on my BPE comment–that’s an MS recommendation, that I’ve never seen in use.

VIA network protocol – gigabit transfers back in 2000!

http://sqlmag.com/database-performance-tuning/comparing-virtual-interface-architecture-network-adapters

@Erik: Hahaha I recall someone I knew wrote an article called “Lord of the Clock Hands” for SQL Server Professional magazine. The magazine is long gone but I have the article in print if you want a copy 🙂

@Dave: All sorts of async stuff would be GREAT to have in SQL Server. But what most people really need is just a simple queue type thing that looks like whatever arbitrary table shape they want (no XML, no contracts, no service) and doesn’t concern itself with transmission or anything else beyond “give me the next thing.”

@Joey: Thanks for the clarification! Someone trying to justify the thing?

@David: Cool, I guess? I’m too fried after writing the post to grok what that means at this point 🙂

Ran across one instance where a company didn’t have a DBA but had various sysadmins. One decided that they would DBCC PINTABLE the largest tables in the system so it would run faster. That server ran out of memory within the day and kept crashing until it was rebooted and we turned that option off. 🙂 I kind of get the idea in general when you had lower memory systems and less was cached, but you really needed to know what you were pinning and why if you were going to use it.

I remember turning on the MDW _once_. Worst mistake ever. I think I killed all of its related jobs within a day or two because it was pretty bad in practice. And yes – that left lots of hooks that didn’t go away until the system went away.

As for English Query, it had potential, but was ahead of its time. Taking a look at PowerBI, I see the evolution of English Query into something that is being shown quite a bit to ask questions and get answers. Whether it’s going to ultimately be useful or not, I can’t say, but with people now speaking to their phones and computers as a more normal thing I can see it being used more.

Scalable shared databases 🙂

AutoClose was there for a reason other than what you stated. It was for SQL Server CE running on Windows handheld devices with extremely limited memory.

Also Uninstall isn’t useless at scale.

First of all you need it if you ever in-place upgrade a system, in order to get rid of the leftover (and still running) SSIS engine.

But secondly it’s an "I’m not paying for this" tool. If you have SQL Server running on a server and disable the services – you may still be held liable for the license (depending on your agreement). Uninstalling is the only way out other than discarding the server, which again isn’t always possible in large environments because there’s usually other software running on it.

@Peter: Ouch x2! Regarding NLP, I agree that it’s a very useful and applicable thing today. However, I question where the work should be done. i.e. should SQL Server be responsible? Why not have an app server render the language into a query and then submit it (perhaps doing a bit of additional post-filtration as necessary)? Seems more scalable and less expensive that way.

@Jay: Nice one!

@Cody:

Auto-Close has been in the product as long as I can remember. (SQL Server 7.0, I believe, but definitely 2000 at latest.) CE was released, IIRC, with Visual Studio 2005. Correct me if I’m wrong on the dates. But it seems to me that CE leveraged the feature a was already there; the feature was not created for CE.

As for uninstall, I’m certainly not an enterprise admin type guy, but wouldn’t scale only exacerbate the problem? You have your nice uninstall script that works when you test it on one server, then you roll it out to all of the others and some little thing is different and it breaks across the enterprise…there goes your weekend. At least that’s the way I imagine it playing out.

SQL Uninstall, like most of the uninstallers that Microsoft creates, craps all over its own bed. I can’t count the number of times I have been literally forced to go in and do a brute-force rip out of an entire product.

@Cody or anyone else:

I was curious about the implementation of Auto-Close in SQL Server Compact Edition, so I googled around for documentation — but I can find no links that mention it in the context of that product. Can someone share a reference?

Only if you autograph it 🙂

@erik… I think there might be a lot of competition if Adam opened it up to useless DMVs as well. But individual DMVs are not technically ‘features’. Many DMVs are useless. When the feature of DMVs was introduced, each group within the SQL Server team was mandated to come up with 3-6 DMVs in their area. But they were not mandated to make sure there was any practical use for the information they returned. And there was no requirement that the DMVs be fully and meaningfully documented. How many DMV column descriptions just echo the name of the column?

But the feature of DMVs, in general, is totally awesome, and incredibly useful!

~Kalen

I see things have not changed at MSFT since I left 25 years ago. Ahem.

I am surprised no one mentioned SQL Server Wen Services: Introduced in SQL Server 2005, and quickly "ripped out" in SQL Server 2008.

@Erik: Well bad news, I was mistaken and that article was actually "Lord of the Ring Buffers." Which is so much cooler. And also I don’t have a copy of it. But I did find three copies of my supercool first-ever print published article, "Using Server-Side Traces to Detect Unused Objects." — http://bit.ly/2sCaFY0 — I can sign it with one of those gold pens they use for footballs and it can be yours for, let’s see… $15,000 seems fair for a collector’s item like that, right?

@Kalen: Agreed, DMVs rock! But none had such a tempting name as "clock hands." What are your top three most useless, just out of curiosity?

@Niels: I think you mean "Web" because I have no clue what a "Wen" is 🙂 … But wasn’t there some kind of web thing all the way back to SQL Server 2000? sp_makewebtask or something like that? I don’t really remember the 2005 thing at all!

@Adam: Yeah, I meant Web. I am not sure about sp_makewebtask, but I remember how you in SQL 2005 could set up an endpoint (the same syntax as for SSB endpoints) that would then be exposed as a web service endpoint. I had great fun ridiculing this during our SQL 2005 training courses.

@Niels Oh yeah! I remember that now! That was kind-of sort-of cool and REALLY useless. I vaguely recall some strange conventions and limitations. And who was it intended for? Any developer could easily roll a better web service in .NET in a few clicks, and anyone who needed a web service would probably be … a developer.

Coming up next: SQL Server Hall of Fame?

Not a feature but I’ve always had a softspot for the syntax SET FIP_FLAGGER …

Hi Adam,

Interesting list!

I can’t speak much to the Integration Services connection via SSMS – I don’t use it. I haven’t needed it for SSIS development or administration. It could be that it’s useful and I’m just unaware of how…

:{>

EOMONTH() is missing! I would rather have had a BOMONTH() function. Or that they had spent this dev time fixing the “String or binary data would be truncated” error

@ErikEJ: The rest of the product 😉

@Steve: Not sure what you mean about the syntax. And I’ve never used the FIPS Flagger so I’m not that familiar. Care to elaborate?

@Andy: Interesting — so perhaps it really is yet another useless spot?

@Aaron:

I was dubious about EOMONTH when I first heard about it, but at this point I use it all the time. I agree that I’d like BOMONTH too, but many projects model "Month" as the last day of the month, so in my experience EOMONTH is amazingly handy.

The string or binary error is certainly the most egregious long-time Connect item and general user annoyance, by far — but I’m not sure we can call it a feature 🙂

Object level restore. Didn’t stick around long for a reason.

@Henry T: That seems like a useful feature on the face of things…but I somehow missed it completely! When was it in the product?

My thoughts on some points in your post:

* Auto-grow. Totally agree with you. I usually compare auto-grow to the airbags in my car: a never want to need them, but still prefer having them over not having them if I do run into a situation where they trigger.

* Service Broker: Definitely not a useless feature, I have used this multiple times for some very intereting solutions. It is just badly implmented (i.e. terribly over-engineered). My solution to that is to carry some standard scripts that do all the hardwork of setting of the bazillion objects needed, so I can focus on the relevant parts.

* DBCC PINTABLE: My understanding is that feature has been removed a long time ago, back in … oh wasn’t that SQL Server 2005? MS didn’t want existing code to error so the syntax is still supported, but it has been re-programmed to do exactly nothing.

FIPS Flagger: Intended to warn you when you use proprietary syntax, in case you are trying to build fully ANSI-compliant code for easy portability.

@Hugo:

Yes, PINTABLE was removed or, I guess, disabled in 2005. I didn’t realize the DBCC command itself was left in the product but I just tried in 2016 and there it is. I’m kind of shocked that it’s still hanging around. Why would they leave it?!?

FIPS Flagger is something I hope I never have to use. Portable code == massive pain in the nether regions 🙂

The SSMS connection to SSIS is mainly there to confuse everyone and put off DBAs from allowing use of SSIS. When it was new I recall DBAs thinking they had to give their ETL developers sa just to use SSIS.

I use JSON for logging API calls. Typically, this is data that is low value and is only needed for auditing or troubleshooting purposes. I log the entire JSON string into a JSON field and I don’t need a new table for each endpoint, I don’t have to figure out what to do with nested objects, and I don’t have to worry about backward compatibility when the data returned by the endpoint changes. Also, it’s not unusual for RESTful APIs to return responses with different schemas so I can shove any type of response in a column and I don’t have to worry about which type it is. Sure, I can do all of these things with a text or ntext, but JSON gives me more flexibility if I ever need to query the data and find something specific.

How about SQL Server Transaction Savepoints?

I have not seen these to be used in real projects (at least with success))

@Andrew: Well I guess that’s yet another vote for (against?) that feature…

@Shelly: Interesting use case! Agreed that for something like this it’s not a bad idea to store it in its native format. But I guess the question is, did we *really* need a full feature to support the occasional ad-hoc query against such data?

@Alexey: Ooh, great one. The fake nested transactions have always been a questionable feature, at best. I’ve seen them used in real projects on several occasions — never with any success at all! Usually used by someone who thinks it will create an autonomous transaction and then doesn’t bother actually testing. Real autonomous transactions would certainly be a great addition to the product…

I find SAVEPOINT very usefull, it lets you rollback the certain part of transacton, and continue under the scope of “global” transaction.This ability is certainly can save you nether regions and simplify your life. The sytntax is simple and readable, no “overingeneering”

Beside that in Oracle your have abut the same, and part of SQL ANSII standartm, and Oracle dev team are doing less stupid things then SQL dev team, sorry to say 🙂

Great stuff! Could you elaborate on the "create fake conversations to make it go faster" thing?

@Alex: You can read up on it here: https://msdn.microsoft.com/en-us/library/dd576261.aspx

Basically the idea is to reduce latch contention by filling data pages such that there is only one actually-used conversation per page. Which is incredibly clever but the fact that as an end user I need to be incredibly clever to get good performance indicates (to me at least) that something is not quite right with the design…

EOMONTH returns midnight on the last day of the month. Which means you can use BETWEEN if you are only ever using DATE data types; for all other data types, you need to use a different type of query and EOMONTH() becomes completely useless. I prefer consistency over having this one exception where you can use one method but you need a different method everywhere else.

It’s far easier to find the beginning of the next period, and always use >= [start of this period] and < [start of next period]. This works across all data types (including future changes you don’t foresee today), and doesn’t need any tricks to find the true "end" of a period.

And I wasn’t saying the string or binary data error message was a feature, just that fixing it would have been a better and more productive use of engineering time.

What is the exact problem of doing EOUMONTH(CAST(‘20190909’ AS DATE))?

Probably i misunderstood u, sorry if so

@Aaron: Totally agree that avoiding BETWEEN for most date/time comparisons is a best practice. I’m lucky in a sense as most of my work these days centers around DW type projects. Very rarely is a time component carried in; the data is almost always aggregated at least to the day if not the month. So in general I deal with only DATE and I don’t have to worry about a lot of these edge cases.It’s really interesting to see the various mixed perspectives on many of these features. “One man’s trash…”

What is the exact problem of doing EOUMONTH(CAST(‘20190909’ AS DATE))?

Probably i misunderstood u, sorry if so

I’ll chime in as a vote for the usefulness of connecting to SSIS from SSMS. I’m not a developer DBA, just a production DBA and I use it when we migrate our customers to new servers.

I can connect to the SSIS instance, then export the packages over to the new instance. Saves the developers aggravation ("What do you MEAN we have to load all of our packages?!?") Of course, they still need to go in and often correct their connections, but such is life.

So, not really a "useless" feature, but I’ll grant an almost "edge-case useful" feature…

What is the exact problem of doing EOUMONTH(CAST(‘20190909’ AS DATE))?

Probably i misunderstood u, sorry if so

I am the same position too, and i am a lot in the SSIS thru SSMS connection, to export and imort stuff, see the stuff, see runing packages. And all the developers trained to do the same on the DEV servers.

@Jason: Thanks for sharing. So it’s the perfect feature for developers who hate change control?

How is managing SSIS directly related to source control?

Beside that, the people may like or dislike stuff like SSMS or source control,

but there are certain situations when both things are useful,

and other are just not present like or dislike it..

My friend Kevin Hazzard refers to JSON as "hipster XML."

;{>

@Andy If JSON is hipster XML, then does that make XML hipster CSV? And is CSV hipster flat file? And before that? Punch cards?

Parameter sniffing? I’ve had awful production issues where parameter sniffing was the root cause, and any workaround to overcome to this adds tons of boilerplate.

Surely Policy Based Managennt (PBM) deserves to be on any list of useless features? Probably it is such a sad little offering, done merely to put a tick in the box, that you’ve not used it.

I felt PBM(Policy based management) was not useful as advertised. Didnt try it in later versions though

@Daniel: I understand your pain but in my opinion parameter sniffing is a pretty good feature. It’s just that the general problem of plan caching and wide parameter variance is a very tough one to solve. At this point there isn’t much to add to overcome the issue on a case-by-case basis. Take a look at OPTION (RECOMPILE).

@Phil: PBM!!! Wow, yet another feature I’d almost completely forgotten ever existed. That’s a really interesting one to think about. It’s a good looking feature on paper and I remember the excitement when it came out — it was going to change everything! Of course trying it out and experiencing the clunky interface and myriad limitations deflated the bubble significantly. And then there is the reality of the situation: It’s a feature only large enterprises could ever possibly need. (And only if they have the worst possible change control practices.) And large enterprises have such varied systems that they can’t possibly control them using policies. In hindsight this is so painfully obvious. How did this feature ever make it past the planning stage?

@Dinesh: Adding to what I already mentioned in my reply to Phil, PBM is yet another of the wonderful "let’s release v1 and never touch it again" features. We’ve yet to see any kind of "later version."

Quick comment on PINTABLE – I’ve got a vague memory of this being an old Sybase command that was ported across to SQL Server – is this right ? It is true that pintable was changed to do nothing – Paul Randal bloggrd about it here – https://www.sqlskills.com/blogs/paul/dbcc-pintable/

@Adam: thanks! Wow that "150 trick" for Service Broker is so trippy, ahahaha. I completely agree with you, something is seriously wrong here.

MDS still requires Silverlight to be of any use! SCD component in SSIS only does 80% of the job, it’s slow, and it only has a destructive edit. SSMS *still* doesn’t have folders in its SQL Agent job view. Job Categories are cool except they don’t do anything. and finally… the train wreck that is SSDT. Will it install? If it does will it break anything else? What’s it called this week?

please add the useless primary & secondary xml indexes, they over bloat the table storage and actually make xml queries slower if we use them!

I want to believe that In-Memory OLTP is the future and that all DBs will be memory-optimised one day. It is the only addition to SQL Server that I have seen that promises such a massive performance increase with a reasonable chance of delivering on it.

However, I was very disappointed with In-Memory OLTP in SQL Server 2014. The reality just didn’t meet the hype. So many workarounds. No FK support and so on. I’m going to try it again later on in the year to see if the added features in SQL Server 2016 make it usable.

Sean – You know, I tried to implement MoT and face-planted on the concrete with it. Nevermore. Nevermore.

Database Diagrams. And the fact that it’s the first object under the database name in SSMS – which causes it to prompt "..do you wish to create them?" – when using the arrow keys to quickly navigate the tree.

I just let it create them every time so it won’t ask me again.

Although the article is great, MDS should not be on te list. Specially 2016 MDS has become a enterprise grade tool, if we forget the use of silverlight. Most of the bugs and faults are addressed in the 2016 version. And multiple customers now run there communication and configuration between the business and BI solution using MDS. For the IT department it is proper tool to manage BIML scripts, set row-level security and a SQL Server managed and operated CMDB.

I love SSMS

@Steve: No clue on Sybase. Ancient history at this point!

@Alex: Yeah, it’s insane…

@Nick: I didn’t realize about Silverlight. That’s… Ugly. The SCD component is never something I’ve gotten to work in a reasonable fashion. ELT FTW! I can’t say much about the Agent stuff. Not a heavy user. As for SSDT, I feel that it’s gotten a lot better in recent releases. I’ve been heavily using it on a project for the past year and I’m fairly happy. Maybe not 100% happy, but I’m not sure that’s possible at this point in my life 🙂

@michael: XML indexes are something I absolutely hate in every way. Not only are they an excuse for people to create bad designs, they create a downstream issue: Because of the crap way in which they were implemented, the indexed XML structure cannot have a nesting depth of greater than 255 (IIRC). In order to “fix” this problem, Microsoft went ahead and limited the nesting depth of the ENTIRE XML TYPE, WHETHER OR NOT IT’S INDEXED. Why does this annoy me? Because many complex query plans — which are of course in XML format — often far exceed this arbitrary nesting depth and we have to play games to see the plan if collected from the DMVs. Awful, awful, awful, stupid design choice to help with a feature that very few people use. Okay, rant mode off.

@Sean: 2016 is definitely a huge improvement. The problem, though, is that “massive performance increase.” It’s just not real for the majority of workloads. Which is fine — but you need to know what you’re getting yourself into (or not).

@Hugo: Database diagrams, a feature everyone loves to hate. It’s definitely saved me once or twice, so I can’t exactly criticize it. But the feature set is so very limited, they don’t print well, and WHY does it need to create database objects!??!

@Remko: Thanks for the feedback in support of MDS; I received similar via Twitter. I’ll have to take a second look.@James: If you, like me, spend 8h a day in there, how can you not? If I didn’t like it I would totally have to find a new career.

Can add Streaminsight to the list. CEP tool that has nothing to do with database and probably found its way into SQL Server bundle by mistake.

Broker is the most annoying feature from my point of view – really great one, saves a lot of time for asynchronous processing (otherwise you have to write intermediate data into some table and create jobs that will query it), great scale-out feature too. But really cumbersome architecture. Attended few usergroup sessions where lecturers tried to explain SB to the audience in 1-1.5 hours. Every time ~30 minutes into the lecture 90% of the audience were deeply asleep. No UI. Poor troubleshooting capabilities. I have a client for which I’ve built partly asynch system based on SB some 9 years ago. It still works with no maintenance at all but 3-4 times during these years due to some misuse SB got stuck. And although they have few DBA teams, they called me to do a troubleshooting.

What I don’t understand is In-memory OLTP. Why do you think it is useless? When you have to support, say, 20-30K OLTP-style transactions per second, memory-optimized tables combined with natively compiled stored procedures and sometimes – when possible – delayed durability do a great job. I’ve built demo on my laptop with a real client’s case – in this demo I did 10K transactions/seconds. On a laptop! What are those "much better and easier ways" to do the same without in-memory OLTP?

I haven’t seen "Managed backups" on this list. It needs at least standard edition, so isn’t serving express edition consumers. Especially since maximum retention period is 30 days.

How about the feature allowing you to create a ‘group’ of stored procedures, each of which is defined by ‘;N’ as part of the name. So ‘uspProc’ and ‘uspProc;1’ are two separate procedures, but only ‘uspProc’ will show in the SSMS tree.

@Michael:

While I agree that StreamInsight was improperly packaged and badly managed from a product perspective, I’m on the fence about whether or not that makes it “useless” per this list. I guess if we’re strict about saying “most useless feature of SQL Server” then it could apply. But it seems like a very useful thing in and of itself.

Regarding in-memory, first and foremost, what does 10k TPS even mean? If we’re talking about updating some tables 10k times a second, that’s easy to achieve. I can do that on my laptop, and much more, with or without in-memory technologies. Scaling a real workload may be much more difficult but it depends on how it was designed. I worked on several 10k+ workloads, 10 or more years ago. We didn’t have in-memory technology, we had less memory than I can currently get on my laptop, and we made it happen.

You’ve taken my comment about “better and easier” out of context. I was referring purely to native optimized stored procedures and functions. And yes, I can do a better job in a variety of ways: I can tune my code so it runs less procedurally; I can re-design things so they happen on the app tier; and I can leverage SQLCLR. I find the native optimized performance gains to be wholly underwhelming in light of what I can already do without it. Mileage may vary for people who have different levels of requirements and/or tuning skills, but the point is, it’s simply not the panacea that marketing makes it out to be.

@ALZDBA: No opinion on that one from this end. 30 days seems a bit limited but I’m not sure what the supposed benefits are for that feature. Lack of Express support doesn’t bother me personally. I’ve never seen any app that used it take a backup. (I’m sure they exist, just not something I’ve ever witnessed.)@Stephen: You win the “most arcane feature to be mentioned” award 🙂

I’m w/ Aaron on the need for a "Beginning of Month" function. We’ve used something like that _far_ more often than we’ve ever used an "end of month" function. It’s more consistent in day numbers – the "day" portion is always 1. 🙂

Lightweight Pooling / Fiber Mode

Totally used this in production on exactly one customer.

Before Windows 2003 rework on the kernel it was pretty much the last trick in the book to reduce context switching on heavily loaded servers. I’m talking servers with 500k to 1M context switches per core.

This was really put in to help the TPC-C benchmark where you have a high frequency of small queries and use NOTHING ELSE in the system.

After Windows 2003? Never.

Filtered Indexes is another pretty useless feature. A smaller index based on a subset of data sounds great, until you realise it doesn’t work with parameters.

@Peter: As I said, I’d like both! In the meantime, beginning-of-month is still quite easy to implement using the tried-and-true truncation method: DATEADD(MONTH, DATEDIFF(MONTH, 0, GETDATE()), 0)

@Wes: That’s a lot of switching! How much better did things get after turning on the feature? I guess if you have tiny OLTP queries and nothing else that feature won’t breach much…@Alex: Sorry, can’t agree with you on that at all. I use filtered indexes all the time in my designs. It’s quite common to see cases where queries, for example, only use data where the “status” column is NULL, or where the is_current flag is 1, or whatever — and those can be safely hardcoded. Filtered indexes are also really useful for creating a unique constraints that work per ANSI (NULLs don’t count). I find that used prudently and creatively, filtered indexes add a huge amount of flexibility to my indexing schemes and help me store a lot less data than I’d have to if I used the filter columns as index keys.

pingback @ https://natethedba.wordpress.com/2017/06/17/stupid-defaults/ =) Thanks for the inspiration Adam!

I don’t agree that sparse columns and mirrored backups are useless.

We use sparse columns in some of our larger flattened reporting tables and it helps tremendously with disk space.

Mirrored backups are good for exactly 1 situation, which isn’t important until you get stuck in that situation. If you write backups locally and you don’t mirror them off-server then if you have an outage where the host goes down you lose your current transaction log backups too. Granted, this isn’t the best way to back your data up but plenty of clients do this so I suggest mirroring them to a share. You could accomplish copying them with other tools outside of the MIRROR TO option as well.

@NateTheDBA: Nice one!

@JohnnyDBA: Yeah, I hear you on the disk space for large flattened reporting tables, but now the architect in me needs to question why you have large flattened reporting tables and whether they couldn’t be re-designed? See, there’s no way out here 🙂

Thanks for the mirrored backup feedback! Good to know.

sp_makewebtask – allowed refreshing of “static” web pages with database contents. No idea why this was ever considered a database feature.

Don’t remember the feature name, but the ability for SQL Server (7.0 era) to receive queries by email and respond by email with the results.

“Workstation ID” keyword in a connection string to allow you to spoof useful data.

Scrollable cursors. Is there really a need to go back to rows you’ve already seen?

Named default constraints on tables. I bet nobody uses sp_binddefault anymore.

System named default constraints on tables. Please don’t make it so hard to compare DDL scripts.

Shared schedules on SQL Agent jobs and scripting out the schedule_id guid for all jobs so you end up with shared schedules accidentally.

The whole mess of quoted identifiers and different “default” defaults in various places between clients and server.

RE: PINTABLE – yes, this was from Sybase and existed in the days when every byte of memory was precious. It lives on in the same sense that sp_configure ‘allow updates’ does, which is to day it does nothing.

RE: Mirrored backups. Definitely should not be on this list. Creating a backup and then copying it is not a substitute since it inherently (albeit briefly) creates a single point of failure.

@Bob:

Wow, quite the list!

Agreed, sp_makewebtask was a joke.

That email thing actually sounds really cool in a useless sort of way 🙂

There are use cases for visiting rows you’ve already seen in some bin packing algorithms and similar but … should you really be doing any of that in SQL Server?

sp_bind* has been deprecated since 2005 IIRC. And I was so very hopeful we’d get some ANSI features (DOMAINS?!?!) in their place. Instead, Nothing…

BPE is very useful with SQL 2016 Standard and a fast PCI-e flash card. Beats local SAS SSDs for latency and consistently keeps 300GB of "L2" data very proximate to real memory (avg read time 2202 microseconds, with observed multi-GB/sec sustained throughput). If we didn’t have BPE, we would be forced to Enterprise by the need for more RAM, but couldn’t afford anything like the 2×12 cores that Standard now gives us. That 128GB Standard threshold isn’t as restrictive when you add BPE. At least, that’s our experience with it over the last 6 months. IMO, obviously. Useless? Negative. Use-case; real. Savings/month: $2K less than a leased, lesser, Enterprise SQL box.

@SAinCA: Interesting. What kind of workload is this, and what kind of PLE numbers do you see? It sounds like you’re hitting a large amount of memory churn and I can understand why it helps in that case, but I wonder if such behavior is a sign of a less-than-ideal data access design? I’m generally not a fan of "just throw hardware at it" approaches, although in this case it sounds like it’s working for you. (Correct me if I’ve totally misinterpreted the situation!)

MDS was a Microsoft ‘knee jerk’ reaction to competitors products so lacked a bit of TLC. 2008 R2 version was next to useless but I used 2012 to help an organisation maintain a set of reference data and cross-reference tables. Any other option would have required a third-party product or a the employment of a programmer. Did the job effectively but only within a well-defined process – no easy way to automate.

So I found it definitely useful but requiring more features to integrate it with the SQL database operations (e.g. run an MDS task as an SQL agent job.) I have not looked in detail at the 2016 version but, if my current organisation upgrades, I would definitely be looking to use it.

Haha, that was a trip down memory lane! Thanks for the amusing reading Adam, and those who commented. Made me fire up a VM with 6.5 and browse through BOL – many things are no longer there… 🙂

Very interesting.

For me, auto shrink, performance data collector and xml indexes are the most misleading and dangerous features. Other features are mainly not useful as advertised.

I remember when 2005 was introduced, the guy spoke a lot about xml indexes and a friend of mine went and implemented it in a product. 7 years later, he painfully removed it from his product.

I have tried auto shrink in my early days and cried for using it. I am wondering why it is still there?

Interesting to see that no one has mentioned about any features of replication (a great feature, even though many replication features are disappearing now, for reasons MSFT only knows) or Resource Governor. I remember when resource Governor was introduced, Oracle criticized it saying it will not serve the purpose.

I’ve found Service Broker to be far from useless. We actually use it to keep data in sync between shards and our core databases. We’re ok with eventual consistency and in my opinion its a lot simpler than using replication.

@adam… top 3 most useless? There are 215 DMVs in SQL Server 2016SP1. I have used maybe 60 or 65. So that leaves 150 that I have never even used. So how can those be ranked into MOST unused? I know, unused is not exactly the same as useless, but for my personal practical purposes, since you’re asking for my personal opinion, they might as well be.

To truly find the most useless, we’d need some sort of poll and find ones that NO ONE ever used. But I don’t have the bandwidth for that.

@Nick: Interesting, thanks for the feedback on that.

@Tibor: Anything cool from those days? I played with 6.5 for all of a week, at the very beginning of my career, so I don’t have any solid memories.

@Prithiviraj: Good thing no one mentioned RG, since it’s quite useful 🙂 … Replication? Well I think it has plenty of use cases but needs a MAJOR overhaul.

@Brandon: Hard to say it’s simpler than replication, but I guess it depends on the topology. Either way, agreed that it does some good stuff, albeit in a fairly convoluted way.

@Kalen: Nice dodge 🙂

How about Native XML Web Services for Microsoft SQL Server 2005?

Nobody used it and it was discontinued. I guess that the fact that SQL server licenses per processor are so much higher than for Windows server had something to do with it.

In memory optimized tables is not a useless feature. I got 7 times increase in performance for inserts into a work table. The design was sub-optimal, application passing parameters via a hot spot table. But it is what it is; it needed optimization and this was the way to do it. Inserts were the bottleneck. Sometimes you have to deal with other people’s design and do whatever can be done with all restrictions of real life. (The same result could have been achieved with multiple values insert, but the ORM could not do it.)

Anyways there are many articles about anti-caching. A must read before tossing this feature into a mental trash bin.

@TiborKaraszi – I have VM’s of everything all the way back to 4.21, but they’re at home, not here in the office. For the history buffs, 4.21 didn’t have SQL Agent at all. Everything scheduled in Windows scheduled tasks!

Adam, do you mean native compilation instead of native optimization? They really don’t mean the same thing.

@Dragan: There are certainly lots of opportunities for better scalability with in-memory features. But it’s still a somewhat limited area in my opinion.

@Kalen: As far as I can tell — I just did a Google search on both — they’re interchangeable, but "native compilation" is certainly a lot more commonly used. So yeah, that’s what I meant.

I agree with Dragan, in memory is a very useful feature. VoltDB has a lot of white papers about how to use this sort of databases. They even call it transactional streaming(or something like that). You can easily get half a million transactions/second with an affordable machine.

I vote for Auto-Shrink.

Cross-database ownership chaining gives me chills, but I know it’s used a lot by third party applications.

Don’t agree with UCP, MDW, DQS and MDS. I used a lot UCP and MDW when a company didn’t have budget for the monitoring tools. Used UCP for the capacity planning.

MDS and DQS are great, but you need to "sell" it to the business.

@DoM: Half a million transactions a second is certainly impressive. But how many database instances, worldwide, really need to handle that kind of load? So sure, it’s useful, but again, quite limited in its scope at least today. Perhaps IoT and similar will change things over the next few years. But I don’t think we’re there quite yet.

@Svetlana: Your tolerance for jumping through hoops is much greater than that of most of us 🙂

@Adam Machanic: Oh yeah, and developers stuck with packages created by people who aren’t around anymore where the only copy of the package besides the one on the production server was on the previous users desktop PC, which was replaced two years ago…

@Adam Machanic: Sorry for keeping mentioning Dr. Stonebraker’s VoltDB, but some companies need this speed. That database engine is In Memory only and they have clients. They even propose an architecture that looks like client/server(or master/slave) from the early 90ies(pretty sobering situation 🙂 ). You get better than nosql systems speed without the "eventual consistency" they are plagued with.

@Jason A: Haha, been there a few times …

@DoM: Yes, agreed, some do need it.

I do not agree that native compilation and native optimization are interchangeable. Just because a Google search shows people using the term incorrectly does not make it ok and meaningful.

I look like a troll mentioning this name. Not my intention. Sorry for that.

SSIS’s File Connection Manager. If I recall correctly there were literally zero Tasks or Components in the box that could make use of it.

How about CREATE ENDPOINT https://msdn.microsoft.com/en-us/library/ms189882(v=sql.105).aspx & https://msdn.microsoft.com/en-us/library/ms181591(v=sql.105).aspx? I seem to recall thinking it seemed quite useful but don’t know of anyone that used it and it was swiftly jettisoned.

Great post Adam!

I am surprised that the SEMICOLON was not mentioned so far.

We’ve been brainwashed by Microsoft lately that it is an unavoidable syntax requirement. That has been proven false by experts such as Bertrand Meyer, as well as by many programming languages (including Microsoft’s own VB) that are powerful and easy to use without burdening the developers with unnecessary syntactic load.

Since Bill Gates "left", Microsoft is getting lazy: they dropped their older mantra (to produce easy to use languages) and, instead, push the burden onto the users / developers. That is part of the same trend that favors C# instead over Visual Basic (even in SSIS script controls, effectively rendering this SSIS feature inaccessible to most SSIS devs).

The decades-old history of C-like languages shows that each additional syntax element leads to increased error rate for developers and I’ve witnessed that time and again.

Instead of blindly accepting such imposed syntax from Microsoft we’d be better off asking Microsoft to continue producing easy to use languages.

If we fail to do that, the semicolon is just the beginning: next we’ll be told that CURLY BRACKETS are unavoidable and mandatory in T-SQL!

Good luck with that 🙂

Interesting that Stretch Database hasn’t made it into the list – its charming resume includes ‘looks great in marketing’, has potential but is so restrictive in practice that it is unlikely to be implemented outside demos. It’s a V1 feature which may just make it to a brighter future but right now is pretty useless.

SQL Server 2000 object dependencies. They never worked!

Ah, the uninstall. It seems pointless until you need it. The usual case was when we had a server with multiple instances on it, and one or more of them got decommissioned but others could not. We just switched them off and it worked fine for years, until those offline instances started popping up on security audits (they scan for dll versions so doesn’t matter if instance is actually up or down). Uninstalling them seemed like the cleanest option – after we realized how much pain it is to explain to non technical security people what it means that instance is down.

Of course it wouldn’t be Microsoft, if uninstall just worked. The problem was that all instances were using LUNs, which were detached from server and deleted long time ago (sometimes many years). And SQL uninstall needs some files from those disks, which cannot(?) be just copied from other instances.

Also, sometimes a server owner would delete the folder where windows keeps all the updates because disc C was low on space and *surely* this data wasn’t required. Except that doing so will make SQL Update fail (needs the files), and as it happens, uninstall will fail as well because it is using same process.

So usefull – yes, but with issues.

@Radu: Funny about semicolon. I also don’t use them unless absolutely necessary. I don’t see the point. But lots of people saying that doom and gloom will follow in my wake. We shall see!

@donaldc: I have HIGH hopes for stretch! Some day…

@Scott: But now we have the newer object dependencies and they … well still kind of don’t work!

@BartS: I still vote for an OS re-pave! Simple and 100% effective every time.

Great article, Adam. Thanks for taking the time to put it together.

Shifting gears to the subject of Hekaton, I first need to make a disclaimer. I’ve not used In-Memory OLTP and so I may be speaking through my hat and apologize for that. That being said…

It’s my understanding that you do have to rewrite code a bit to make use of In-Memory OLTP even in the face of the recent improvements.

With that in mind, I wonder what it is that actually provides the improvements that some have seen. Is it the In-Memory OLTP functionality that provides the improvement or is it the fact that people had to follow a certain set of rules to use it?

As a recent example (2 days ago on Friday), our SQL Server based telephone system was having some serious problems with being to keep up with the number of calls being taken and doing "other necessary things". The system only has 2 CPUs and 32 GB of Ram but the "disks" are actually SSDs, including TempDB. The first reaction by those large and in charge was that the system was obviously underpowered for the given workload and were looking to adding more CPUs (increase in license costs) and memory. I wasn’t convinced.

Upon investigation, I found that a particular view was being called upon twice a minute. It joins 6 tables and one of them has a self join so 7 joins in total. Every time the view was called upon, it used 19 GB of logical I/O, took 14 seconds to execute, and usually spiked the single CPU is was allowed to use (MAXDOP = 1 for the system) to somewhere beteen 90 and 100%. The execution plan showed mostly CI scans with a couple of NCI scans to return a usual 20 or so rows of data that needed to be processed and it took the same effort to return 0 rows.

To make a longer story shorter, I repaired a non SARGable bit of criteria and removed an unnecessary "OR" from the view. Suddenly, everything went from being scans to seeks (with high performance ordered range scans when appropriate), logical I/O dropped from 19 GB to 380 MB (49 times less), duration dropped from 14+ Seconds to 123 Milliseconds (115 times faster) and, of course, CPU usage followed suit. The telephone system now sung with performance.

How did that happen? I "followed the rules" for a good query.

And that brings us full circle back to the improvements people claim about In-Memory OLTP. Is it because they were forced to change code to "follow the rules" or is it just because they used the feature?

@Jeff Moden – the performance benefits of In-Memory are derived from many facets:

1. no write ahead logging

2. the logging that must be done is performed much more efficiently

3. modifications to RANGE and HASH indexes are not logged

4. lock/latch free table and index design

And that’s not including natively compiled TSQL.

It could be that you have to touch some code, and then again that might not be required.

Many have spoken of using In-Memory OLTP to migrate temporary objects from TempDB to memory, and that’s an excellent use case.

But I would also say that if your workload is write-intensive and/or highly concurrent, it’s likely that deploying In-Memory will exhibit a performance improvement. Particularly true for tables with a lot of INSERTs, that are clustered on an IDENTITY or SEQUENCE column, where there is guaranteed to be latch contention on the last page.

@Ned Otter,

Thanks for the reply and the tips, Ned.

I can’t believe nobody mentioned distributed partitioned views. Lots of smoke but no fire there.

I remember many of these features from Microsoft courseware. I also remember teaching them with a side dish of "OK, this is a cool feature, you need to know it for the exam, but don’t ever use it."

I gotta say as a veteran of all the SQL versions since 4.1 on OS2 and a consultant for most of those years, some of those "most useless" features were, er, great for my career. PINTABLE especially. Thanks, Microsoft!

Seriously, we are getting throughput with in-mem oltp tables that I couldn’t have dreamed of with traditional tables, but you’re right, it’s for specific applications so far. I still think this is the future.

SSMS is awesome. I just wish MS would go ahead and convert the thing to 64-bit.

Database diagrams? Oy. Useless? Maybe. But letting them update the database? That makes it into the most potentially destructive feature in the environment.

Great post! Thanks for the memories.

@Don: I seem to recall doing something with DPVs on one project. Can’t recall if we rolled it to production or not. But agreed, not much action there.

@Craig: Before I took my first MCP exam someone gave me a hint: "There’s the right answer, the wrong answer, and the Microsoft answer." I passed my exam, first shot, without choosing many answers I thought were correct…

@David: I can say the same for the oft-maligned NOLOCK 🙂

What no mention of CLR stored procedures? I am a .NET programmer but have no interest in incorporating the .NET CLR into my SQL Servers, nor are most others I know.

@Paul

In my opinion CLR modules (functions, mainly) are extremely useful in the database in a number of cases. I’ve personally used them on several projects, and I’m surprised to hear from a .NET developer who can’t think of any use cases — even just simple string splitting? Keep in mind that CLR integration is not just for end users, but also for the database engine itself. Several post-SQL Server 2005 features use CLR internally for various purposes. So I think it has been without a doubt a successful foundational feature; it’s used almost universally, even if you don’t explicitly turn it on!

Quick comment on JSON, I use it a fair bit if I have n records with one column difference and pass that to the front end as they can deal with JSON easily. It is also faster than the XML functions (in my experience).

Quick comment on JSON, I use it a fair bit if I have n records with one column difference (or a set of options) and pass that to the front end as they can deal with JSON easily. It is also faster than the XML functions (in my experience).

Yes, it is in fact considerably faster than XML. The SQL Server team recently posted this showing that it’s 10x faster: https://blogs.msdn.microsoft.com/sqlserverstorageengine/2017/11/13/json-parsing-10x-faster-than-xml-parsing/

Impressive. I’m not sure, though, why they can’t circle back and improve XML processing. I think that would bring great value to a lot of existing apps where JSON migration might take many years.

Probably because most .Net developers (that i know at least) hate xml, mostly due to all the extra tags it has to deal with and there is no true standard. Would be interesting if they could speed it up, still think JSON will become the preferred method though as its faster, neater and results in smaller package sizes.

I suspect the complaint about memory management “Not Freeing Allocated Memory Except Under Pressure. ” may have come from a windows sysadmin. I have had this conversation about every 90 days for the last four years. I’ve configured max memory for sql server to leave 2-3GB free to make sure the OS doesn’t get starved, but the os admin team can’t get their head around allowing sql server to use say 13GB of memory on a VM that has 16GB.

It always goes something like, “sql server is using 90% of the memory on these servers, so we need to fix whatever problem it’s having.” Because in their experience, high memory use is a problem and that problem needs to be alleviated. We aren’t actually having problems, except when you define high memory use as a problem.

Agreed. The “memory leak” myth is one of the oldest and most pervasive in the SQL Server world.

MDS (Master Data Services) is one feature I wish Microsoft would deprecate. From a database engineering and management perspective it simply sucks, and it will be a maintenance and performance bottleneck in any ETL process that leverages it.

I’d like to see some effort put into the Database Diagramming feature of SSMS. This thing hasn’t changed a bit in 20 years. For example, the GUI could be more robust; providing better support for reverse engineering, cross database relationships, and also logical modeling in addition to just physical design.

Totally agreed on both, especially the database diagrams. Backing up a bit, how about simply a way to print the things in a decent fashion?

I just want the arranger to do a better job of arranging without crossing joins (as much as possible) and stop making diagrams that are 10,000% larger than they need to be.

native backup for huge dbs, MARS functionality, SOAP protocol with HTTP endpoint need to be added to this list. SSB is really cool feature and it is definitely still used nowadays.

What is your concern with native backup on larger databases? Are you saying that you can get better performance (or something else) using a third-party tool?

I’ve seen third party tools that are faster and compress better, but personally that introduces a risk I’m unwilling to take.

If I find myself in a situation where I need to restore a backup and logs, the last thing i need is to be worrying about a third party product on top of everything else. It would only be only saving space and/or time, and we have plenty of both. Space can be obtained, time, well, so far there has always been another second right after the last one.

English Query- I cried.

We deployed it in a very large production environment and it was quite advanced. You spent a little time associating verbs and nouns to objects and teaching it how words in your world work, and then you just let users ask it normal questions. It was really quite amazing, and I wish more people would have tried it out. I think its demise was only due to a lack of people trying it out and discovering how great it was, which was a result of MS putting zero effort into marketing it.

How about Recourse Governor (I have used very rarely) and connecting to reporting services in SSMS (I have Never used, may be I am not sure on the use cases)?

Regarding Auto-Shrink:

Can anyone explain (for a dummy) why SQL Server doesn’t or can’t have some built-in capability to keep its files as small as possible without messing up performance?

I mean, for example, is it because the concept of making files smaller means a compromise in how fast the database can get at records, so that theoretically, to run fastest it would be best to let files get huge, if you have room for them?

It makes me think it’s something like, if files are compressed they take up less space, but it requires more processing to uncompress them as they’re needed, so that may save disk space, but it slows things down by adding overhead. Is it something conceptually like that?

On the other hand (and this goes back to my primitive 1980s DOS indoctrination), as a file get physically larger it takes longer for a drive head to move around and read the data, so a smaller file (if properly organized) should perform faster.

Or is that completely crazy?

(Don’t abuse me; I’m trying to learn!)

Learning is allways a good thing. One of the best articles around is Paul Randalls, but if you google you will get an enormous amount of other people who agree on this:

https://www.sqlskills.com/blogs/paul/why-you-should-not-shrink-your-data-files/

The short answer is fragmentation within the data files. To shrink data SQL Server will transfer extents from the end of the file to open spaces it can find.

Another reason is that there is the risk that the shrink will be followed by an autogrow, then, if you are unlucky, another shrink and so on

Cursors? lol

Forgot table value functions too. Seen so many articles about not using those that it might as well be useless.

I do not agree with the JSON feature being useless, in fact it is seems to achieve what I think was a great missed opportunity in “Columnsets (and Sparse Columns in general)”.

An interesting realization http://telechargersonnerie.aciginingeo.cf Useful info. Lucky me I found your web site by chance, and I am stunned why this accident didn’t took place in advance! I bookmarked it.

I hereby nominate “Implicit Transactions” for inclusion in this list 😺.

And, let us not forgot the GOTO statement 🙀.

Comments are closed.